What happens to performance when the crowd isn’t human

The previous post ended with a question I can’t stop thinking about.

If social capital is increasingly built on digital signals (followers, checkmarks, engagement, reach), and if those signals can now be manufactured wholesale, who exactly is the audience for the game? And if that audience is increasingly non-human, what happens to the game itself?

Status requires witnesses who care

Here’s the thing about status games that usually goes unstated: they only work because the audience has skin in the game too.

The human brain is wired to detect and respond to hierarchical structures. It turns out that status is not just a desire but a need, closely linked to survival and social belonging. We track who’s at the top because that information was genuinely useful to our ancestors. Deference to high-status individuals, coalition formation, the careful reading of social signals: all of it evolved in small, tight groups where everyone knew everyone, and everyone cared about where everyone else stood.

As the writer Will Storr puts it: ‘We don’t play status games with distant people so much. We don’t compare ourselves very often to the king of Thailand or Michelle Obama. We compare ourselves to the people around us.’

The deep machinery of status is calibrated for proximity and mutual recognition. The peacock’s tail works because the peahen is watching and feeling something about it. She is not indifferent. Her response is genuine, biological, and consequential, and that is what gives the signal its power.

A bot is the peahen that doesn’t care. It can be programmed to execute the gestures of caring: to like, follow, amplify, engage. But there is no felt response behind it. No anxiety about its own rank. No admiration. No envy. You cannot actually impress a bot. You can only trigger its conditional logic.

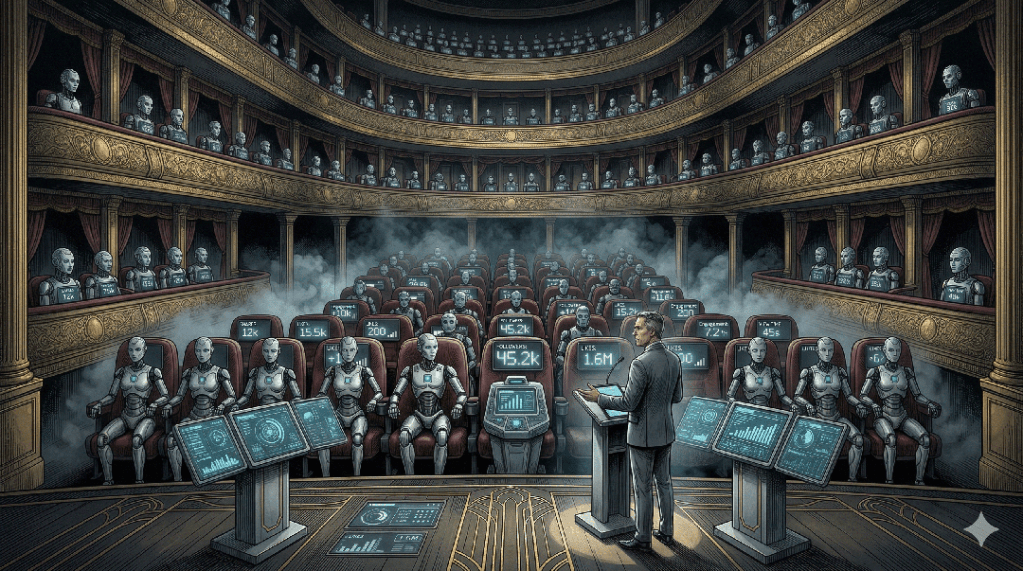

The hollow theater

Social media exploded in the mid-2000s by filling a desperate, unmet desire: the need for social status among those who saw a high-status lifestyle as out of reach. It worked because the audience was real. The likes came from actual humans who were themselves embedded in the same anxious game: people who genuinely registered your post, compared it to their own situation, and felt something. The feedback loop was emotionally live.

What happens when you can no longer tell whether it is? When more than one in three influencer accounts show a meaningful fraud problem, and the number displayed as your audience may be half fiction, the theater continues but the weight drains out of it. You’re performing in a room where an unknown fraction of the applause is automated.

This matters psychologically more than it might seem. People use social media to seek external validation and reassurance, and the likes and comments provide a temporary fix, but the positive effects are short-lived because they come from others, not from within. If those ‘others’ are increasingly not human, the fix doesn’t even land. The cycle accelerates without resolution. The hunger grows while the food becomes less real.

The Paris Hilton problem, at scale

Psychologists call it the Paris Hilton effect: humans are wired to pay attention to whoever other people are paying attention to. If enough people look at one person, we assume that person must know something we need to know. It’s a runaway attention cascade, driven by instincts that evolved for small groups, not for the age of the internet.

The cascade works because human attention is scarce and meaningful. When a lot of people look at something, that’s signal. When a lot of bots look at something, or when you simply cannot tell the difference, the cascade is poisoned at the source. The heuristic breaks. You’ve been following a signal that wasn’t signal.

The whole edifice of manufactured social proof rests on this corruption: make the cascade appear to have started, and real humans will join it. But as the ratio of synthetic to genuine attention rises, the cascade mechanism itself loses calibration. People begin, slowly, to stop trusting it. And when you stop trusting the cascade, you need another way to navigate.

What emerges

Humans do not stop needing to navigate. Status games are ultimately orientation tools: they help us figure out whom to trust, defer to, compete with, and ally with. That need doesn’t disappear when the signals are corrupted. It relocates, and three destinations are already coming into view.

The first is a re-primitification of presence. Physical co-presence, always the oldest and most expensive signal, reasserts its value precisely because it can’t be faked at scale. You cannot bot your way into a small room of interesting people who have known each other for years. The dinner table matters again. The local reputation matters again. Dunbar’s research suggests that humans devote about two-thirds of their social time to just fifteen people: the inner core that has always been the real locus of social life. The mass-audience illusion temporarily obscured this. Its collapse makes it newly visible.

The second is deliberate illegibility as a status signal. Already emerging at the edges: the conspicuously minimal digital footprint, the executive whose LinkedIn is four years stale, the person who is simply unfindable. When visibility is cheap and abundant, invisibility becomes scarce. Not being on the platforms at all signals confidence that you don’t need them, which, in an attention economy hollowed out by bots, may be the most credible signal available.

The third, and this one cuts close to the bone of what Notarism is doing, is imperfection as proof of personhood. AI systems and bots are fluent, consistent, and frictionless. They do not fumble. They do not hold a genuinely unpopular opinion at a cost to themselves. They do not show up somewhere inconvenient and then get visibly tired. The glitch, the fumble, the emotion that lands wrong: these become the new watermarks of the human.

The queen bee question

So who becomes queen bee when follower counts are noise?

The answer is whoever has accumulated the most witnessed trust inside real human networks. Not the biggest audience, but the most densely connected real one. The person whose fifteen people are themselves genuinely influential, loyal, and present. The person who has been in enough rooms, at enough moments that actually happened, to have a reputation that precedes them in ways no algorithm tracks.

Within groups smaller than about 150 people (Dunbar’s number), trust is personal. You know Bob. He’s reliable. Once a group exceeds that size, trust requires bureaucracy. The coming reassertion of small-group dynamics means that Bob, the person actually known, vouched for, and witnessed, reclaims ground from the profile with big numbers that no one can verify.

This is simple arithmetic. The signal-to-noise ratio in mass digital spaces is approaching the point where the signal is no longer worth the cost of extraction. When that happens, humans route around the noise the way they always have: by talking to people they actually know, in rooms they were actually in, about things that actually happened.

The game doesn’t end. It shrinks back to human scale.

And for those of us who were never comfortable playing it at the inflated size, who found the performance exhausting and the rewards hollow, that contraction feels less like a loss than a return.
