Verification in the age of infinite generation

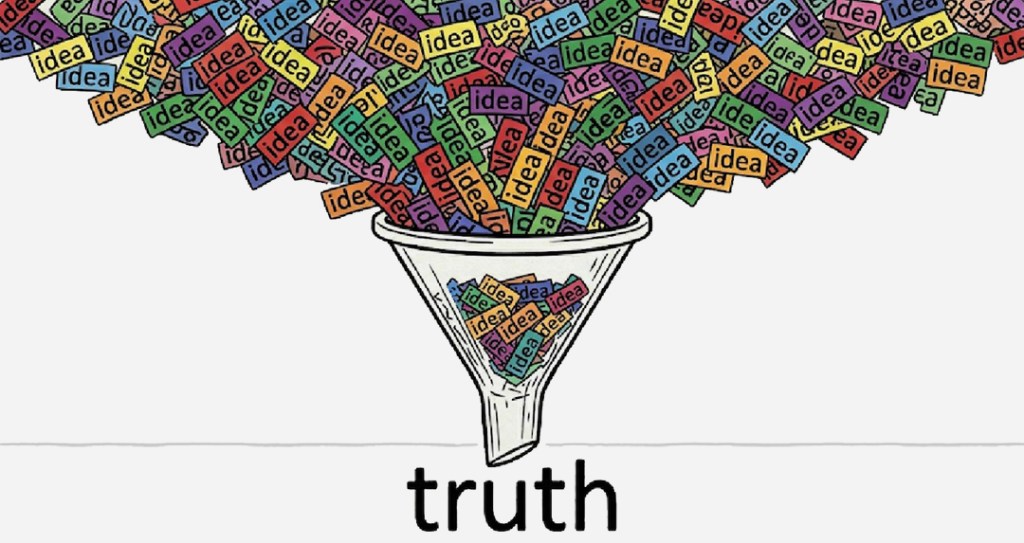

Something fundamental is shifting in how knowledge is created and how it is trusted.

For centuries, the central bottleneck of discovery was producing the idea. Insight was scarce. Theories took years to develop. Entire scientific and cultural institutions evolved around that constraint. Careers were built on the ability to generate original thought. Journals, peer review, and replication norms all assumed a world where new ideas arrived slowly enough to be evaluated by human communities.

Today that assumption is breaking.

Generative systems can now produce candidate explanations, designs, arguments, and models at speeds no human institution was ever designed to absorb. The cost of generating plausible ideas is collapsing. Where once a researcher might develop one hypothesis over months, machines can now generate hundreds in hours.

Terence Tao, the Fields Medal-winning mathematician, recently described the shift in precise terms: AI has driven the cost of idea generation to near zero, much the way the internet drove the cost of communication to near zero. But the result is not abundance. The result is a new bottleneck. “Now we have to verify them, evaluate them,” Tao explained in a conversation at UCLA’s Institute for Pure and Applied Mathematics, where he compared the current moment to the early internet, an ocean of noise with islands of signal buried inside.

This does not mean insight has disappeared. It means the bottleneck is moving. The challenge is no longer simply how to produce answers. It is how to determine which answers are real.

We have automated creation. We have not yet automated truth.

Scientific communities are already feeling the pressure. An analysis of submissions to the International Conference on Learning Representations (ICLR 2026) found that 21% of peer reviews were fully generated by AI, with more than half showing signs of AI use. The discovery was triggered when researchers at Carnegie Mellon noticed feedback that included hallucinated citations and requests for analyses unrelated to the papers being reviewed. A separate Nature survey of 1,600 academics found that more than 50% of researchers have now used AI tools while conducting peer review, often in violation of journal policies.

Meanwhile, paper mills, commercial operations that mass-produce fraudulent research articles, have industrialized the process further. A recent editorial in eNeuro described the situation as “arguably the largest science crisis of all time,” with hundreds of thousands of fake publications entering the literature annually, the rate accelerating thanks to generative AI. A comprehensive action plan, the Stockholm Declaration, was drafted at the Royal Swedish Academy of Sciences in June 2025 to address the crisis.

Peer review systems built for human-speed output are encountering machine-speed volume. Replication remains slow and resource-intensive. Attention — the ability to meaningfully examine and validate claims — is becoming the scarcest resource in the knowledge economy.

Images can be generated faster than they can be authenticated: detected deepfake files surged from roughly 500,000 in 2023 to an estimated 8 million in 2025, a 1,500% increase. Videos are synthesized faster than they can be believed: human accuracy in detecting high-quality deepfake video hovers around 24.5%. Voices are cloned faster than they can be verified: voice cloning has crossed what one researcher called the “indistinguishable threshold,” requiring as little as three seconds of audio. Europol has estimated that 90% of online content may be synthetically generated by 2026.
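As a quick check on the deepfake figures quoted above, the short sketch below recomputes the percentage increase from the two numbers in the text. The function name `percent_increase` is an illustrative helper, not something from the cited sources.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the percentage increase going from old to new."""
    return (new - old) / old * 100.0


# Figures as quoted in the text (the 2025 value is an estimate).
detected_2023 = 500_000
detected_2025 = 8_000_000

increase = percent_increase(detected_2023, detected_2025)
multiple = detected_2025 / detected_2023

# A 1,500% increase is the same as 16 times the starting volume.
print(f"{increase:.0f}% increase, i.e. {multiple:.0f}x the 2023 volume")
```

The distinction matters when reading such statistics: a 1,500% increase means the 2025 volume is sixteen times the 2023 volume, not fifteen.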

The historical pattern & the new discontinuity

Some observers argue that this moment is not unprecedented. When the printing press dramatically lowered the cost of producing ideas, Europe did not drown permanently in noise. Instead, new verification structures emerged: scientific method, journals, replication norms, professional societies.

When the internet collapsed the cost of communication, it brought spam, misinformation, and overload, but also search engines, reputation systems, ranking algorithms, and new ways of filtering signal from noise.

By this reasoning, the current explosion of generative capability represents another phase shift. The bottleneck moves, and progress reorganizes around it. Verification becomes the new frontier. New infrastructures form. Selection improves alongside generation. There is truth in this view.

Civilization has repeatedly adapted to information shocks by building better filters. Bottlenecks, ironically, are where innovation concentrates. But there is also reason for caution.

The printing press accelerated human production. The internet accelerated human communication. Generative AI may represent a deeper discontinuity: the emergence of non-human rates of idea generation. The scale of output may be qualitatively different. Autonomous research loops, synthetic authorship, and machine-generated experimental proposals at massive scale could strain verification systems in ways history only partially prepares us for.

The gap between generation and validation may point to a prolonged structural tension.

Can verification be scaled?

A central question emerges: Is verification itself fundamentally human-limited?

Optimists argue no. Just as machines now help generate hypotheses, they may also help test them. AI systems can simulate environments, cross-check claims, detect statistical anomalies, and assist in automating parts of the scientific method itself. They can serve as reviewers, replicators, and critics. Over time, layered systems of human-machine validation may dramatically lower the cost of assessing truth.

If this is correct, the real race is not between humans and AI. It is between systems of verification.

Scientific validation platforms, autonomous experiment networks, reputation graphs, provenance tracking systems, and hybrid review architectures could become the central infrastructure of the next knowledge era. The Coalition for Content Provenance and Authenticity (C2PA) is already developing cryptographic standards for content signing. Gartner has placed digital provenance among its top ten strategic technology trends through 2030. Power may concentrate not with those who produce the most ideas, but with those who build the most trusted filters.
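To make the idea of content signing concrete, here is a minimal sketch of the underlying principle: a tag is bound to the exact bytes of a piece of content, so any later alteration is detectable. This is not the C2PA format. Real provenance standards rely on public-key signatures and richer metadata, whereas this sketch assumes a shared secret key and uses only HMAC from the Python standard library. The names `sign_content` and `verify_content`, and the demo key, are hypothetical.

```python
import hashlib
import hmac


def sign_content(content: bytes, key: bytes) -> str:
    """Hash the content, then produce a keyed tag over that hash."""
    digest = hashlib.sha256(content).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()


def verify_content(content: bytes, key: bytes, tag: str) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = sign_content(content, key)
    return hmac.compare_digest(expected, tag)


# Hypothetical demo values; a real system would manage keys securely.
key = b"demo-key-not-for-production"
original = b"image bytes as captured"
tag = sign_content(original, key)

print(verify_content(original, key, tag))            # unmodified content
print(verify_content(original + b"edit", key, tag))  # altered content
```

The sketch shows both the promise and the limit of provenance: it can establish that content is unchanged since it was signed, but it says nothing about whether the content was true when it was signed.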

Yet this optimism must be tempered by the realities of grounding.

Verification is not purely symbolic. Many truths must still be tested against the physical world. Experiments require time, materials, and observation. Causal claims demand longitudinal scrutiny. Institutional legitimacy evolves slowly. Even the most advanced computational verification systems may remain partially dependent on human judgment, physical constraint, and social trust.

Tao himself has acknowledged this tension. In a conversation with Renaissance Philanthropy, he described a fundamental “trust problem with AI” and stressed the need for “ways to independently verify outputs,” including training humans to identify errors, not just building machines to catch them.

The return of human anchors

As generative technologies expand, societies may develop multiple overlapping layers of truth assessment: computational validation, statistical filtering, institutional review, market-based reputation, cryptographic provenance, and cultural or symbolic forms of witnessing.

For most of history, one of the simplest verification mechanisms was not a document or a device. It was presence.

“I was there.” “I saw this happen.” “We experienced this together.”

Before journals, before digital ledgers, truth often began as a shared physical encounter. Someone observed. Someone acknowledged. Reality was grounded in mutual awareness.

In an era when mediation becomes increasingly synthetic, that instinct may regain importance.

Notarism as an artistic response

Notarism explores this possibility, not as a technological solution to large-scale verification, but as a cultural and artistic investigation.

A Notarist is physically present with another person during an action, performance, or moment. They create a symbolic artifact — a written note, a stamp, a trace — that attests not to scientific correctness or legal fact, but to experienced reality. The foundational text on the practice, “The Art of Witnessing,” describes the framework in full.

This gesture does not scale to machine speed. It cannot evaluate thousands of hypotheses or adjudicate complex datasets. It does not compete with algorithmic verification systems. Its purpose is different.

It asks whether, in a world of infinite synthetic generation, human co-presence can remain a meaningful form of validation.

A notarized artifact does not prove an idea is true. It does not guarantee authenticity in a technical sense. It does not replace institutional trust mechanisms.

What it preserves is something more elemental: a moment of shared attention. A record that two people occupied the same time and place and acknowledged that something occurred.

In a civilization increasingly mediated by generative systems, such acts may function as cultural anchors, modest reminders that not all forms of verification are computational.

The next era of discovery

If the last five hundred years were defined by the scarcity of ideas, the coming decades may be defined by the scarcity of certainty.

Progress will likely depend on how effectively societies build new filtering infrastructures: technological, institutional, and human. Machine-assisted verification will expand. New norms will form. Some existing systems will fracture and be replaced.

But alongside these scalable solutions, there may also be renewed interest in grounded, experiential forms of trust.

Creation is becoming effectively infinite. Presence is not.

Awareness cannot be parallelized. Attention cannot be mass-produced.

The future of truth may not belong to a single system. It may emerge from the interplay between computational verification at scale and human witnessing at the level of lived experience.

In that sense, the current moment is not only a crisis of knowledge. It is an invitation to reconsider how we decide what is real, together.
